See the structural ceiling of neural AI explained using fractals. Learn why bigger models can only approximate truth, not capture the rule.

Many people in the AI industry have been hoping that neural models will keep improving until errors become negligible, and that they'll work just as well on regular patterns as they do on noisy ones.

In many simple cases this does work, which is why LLMs have gained so much traction. But there's good reason to think that with some kinds of complex regularity, neural models will always plateau well below 100% accuracy.

Here's a way to think about it.

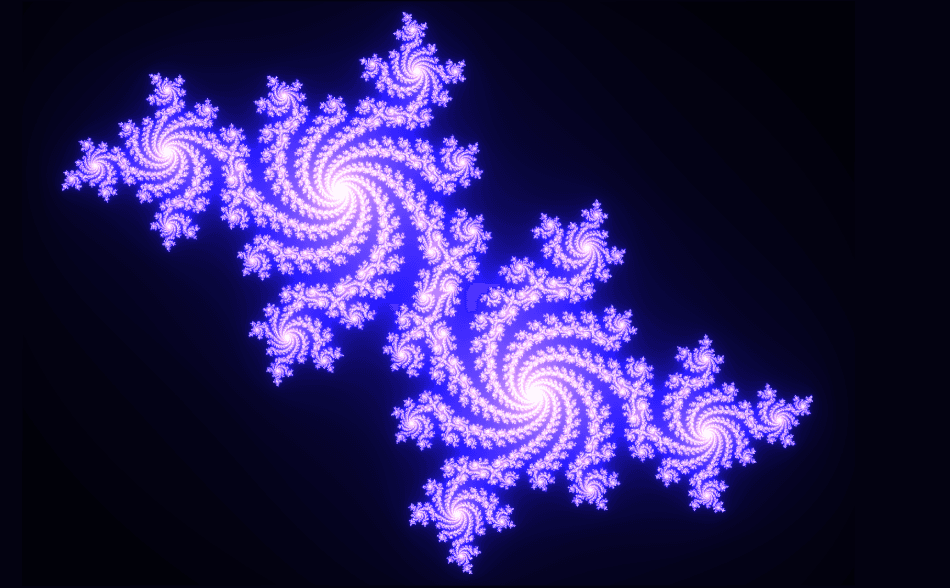

A fractal is a mathematical object with regular structures that repeat to infinity - you can zoom in anywhere and get more detail in full resolution without it ever ending. All sorts of data behaves like a fractal, from mathematical structures to computer algorithms to the branching of species in evolution.

Because of the fractal's regular structure, it's easy to model with symbolic rules. In fact, symbolic rules were used to generate the famous images of fractals you've probably seen.

But what happens if you train a neural model on data with a shape like the overall fractal and then zoom in on a segment?

You see a blurry mess.

The learning process only ever learns to make predictions at the same granularity as the training data. If you didn't feed it data representing a particular region in detail, it can't reconstruct that detail - it can only approximate.

With any specific failure like this, we can always say: let's feed it more data. And bigger models with more data can get better at modelling these regularities. But the limiting factor is that fractal complexity can produce infinite novelty, while neural methods can't train on infinite data with infinite energy.

This is why we're all used to LLMs seeming intelligent but really having only a shallow understanding of things. The concrete detail of the real world is constantly outstripping the approximate representations of neural models.

Symbolic systems don't have this problem. If you've identified the rule that generates a fractal, you can zoom in forever and it stays sharp.

This is the ceiling neural models keep hitting, not a temporary limitation of scale, but a structural one. More data and bigger models push the boundary outward, but the boundary remains. Fractal complexity doesn't compress neatly into approximate representations, and the real world generates fractal complexity constantly.

Symbolic systems don't approximate; they capture the rule and follow it wherever it leads. The problem is that not everything has a clean symbolic rule, which is why neural models exist in the first place. The deeper point is that the two approaches have genuinely different failure modes, and a system built on only one of them will always have a ceiling.

The question isn't which approach wins. It's whether we're honest about what each one can't do.