Why do LLMs fail on complex logic? Discover the structural limits of deep learning and how the neurosymbolic principle provides explainable, consistent, and trustworthy AI for regulated industries.

The key lesson from understanding the strengths and weaknesses of neural and symbolic AI is what we call the neurosymbolic principle: there are two kinds of data, and neural and symbolic methods are suited for different tasks (Read the break down in another blog: two kinds of data, two kinds of AI). Therefore, AI systems should be grounded in a sensible division of labour.

This is easier said than done. Many domains have data which contains regularity and noise at the same time, intermingled with each other. A good neurosymbolic system needs to know how to separate the two autonomously to treat them in the right way.

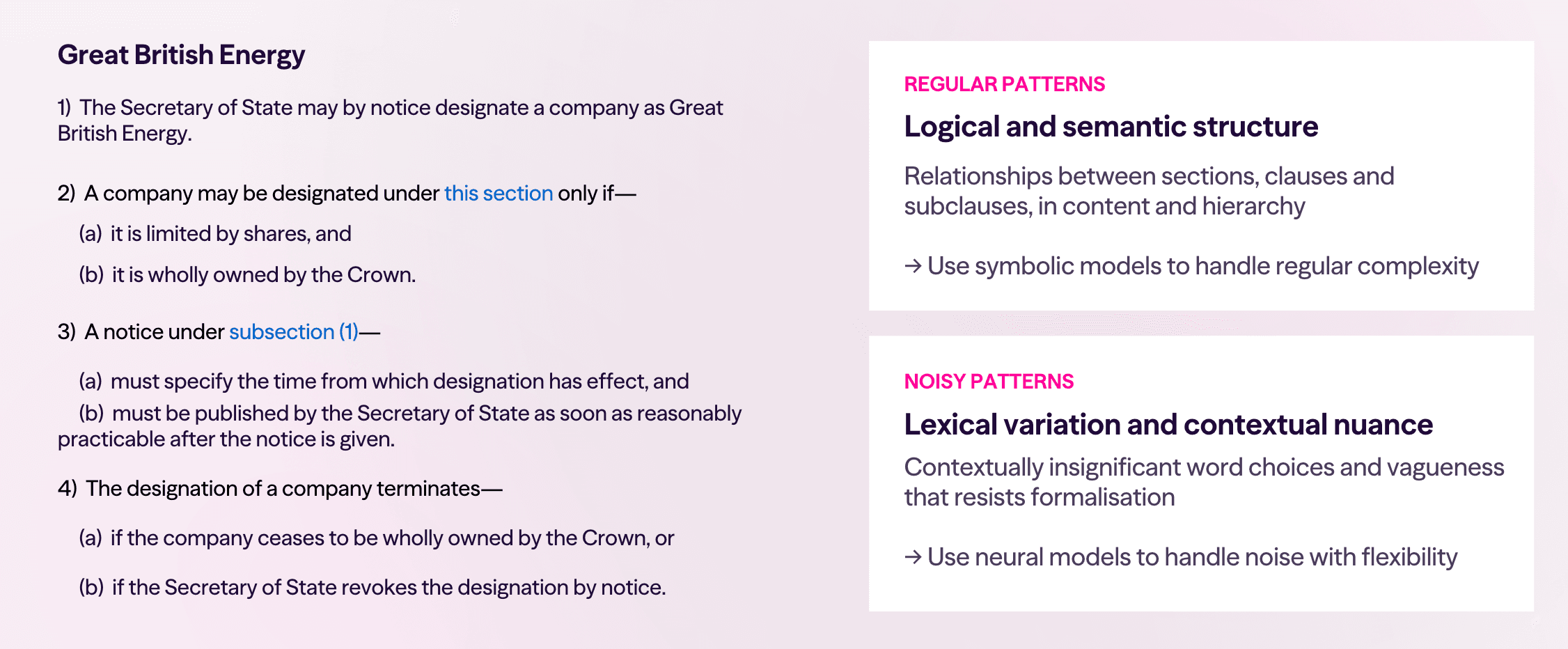

Take UK legislation as an example.

There are clear structural regularities in legal text: sections, clauses and subclauses increase in fixed, predictable sequences; different indentation levels express rigid hierarchical relationships; links between sections encode logical connections. These are all patterns that can be easily represented with symbolic rules, meaning you don't have to settle for the approximations and hallucinations of neural models.

On the flip side, there are properties of language that feature noise. It might not matter whether the text says "the time from which the designation is effective" or "the time from which the designation has effect". Where that interchangeability exists, neural models can screen out this semantic noise much more efficiently than symbolic ones. And if a system ever needs to interpret something vague like "as soon as reasonably practicable", the nuance involved might best be handled by the high-dimensional representations of a neural model.

The insight is that we're no longer saying we should perfect neural models to handle every task, nor that we should represent all data entirely symbolically. Instead: identify where data contains regular structure, because then you can use symbolic models. And where you can use symbolic models, you can build trust.

This is because symbolic models are inherently explainable - the rules they formulate can be inspected, validated and controlled.

They're inherently consistent, because it's in the nature of rule-following that the same rules are followed the same way every time.

And they're inherently accurate - so long as you've correctly identified that the data is regular and your model fits, your predictions are guaranteed.

The division of labour isn't about choosing one approach over the other. It's about using each where it belongs.

It's actually an open secret in the AI industry today that the neurosymbolic approach is the way to go.

If you look at how platforms are being developed by the big model providers, they're augmenting LLMs with capabilities like web search, code generation, and various abilities to call out to other tools. This is because there's now an unspoken consensus that generative models are not going to be all-purpose systems.

And whenever they call out to other tools, those calls introduce a kind of regular structure, while the tools themselves are often symbolic - as is the case with most code.

But rather than accepting the neurosymbolic paradigm in a hushed and haphazard way, we ought to embrace it proactively, so that we can develop coherent research programmes for distinguishing regularity from noise where it matters.

This is especially vital at the frontier, because this is the only vision now which can still sustain progress towards general intelligence.

When we look at legislation, we see clearly visible differences between regular patterns and noisy ones. But one of the key tricks in human intelligence is that we're able to take data that looks thoroughly noisy and - with time and analysis - find useful regularities in it.

That's fundamentally what the scientific process is. As we experience the world, we get an onslaught of noisy information through our senses, but we develop science and technology by finding regularities in that information and formulating them as theories.

This combination of noisy perception and rule-based understanding in humans is the paradigm example of neurosymbolic intelligence.

And the exciting prospect of automating scientific discovery is the logical endpoint of developing trustworthy neurosymbolic models today. If we can build systems that identify structure where it exists, represent it symbolically, and handle genuine noise with neural methods - we're not just building more reliable AI. We're building AI that reasons the way humans do when they're doing science.

That's where this is going. And it starts with being honest about what each tool is for.