AI benchmarks were meant to measure progress — but are they actually stalling it? We break down how chasing scores can derail real advances in intelligence, and what the ARC Prize gets right.

The most overused acronym in machine learning has got to be ‘SOTA’ (state of the art). How many hundreds or thousands of papers have branded a system as SOTA by some measure, not just as a show of incremental progress but in anticipation of something truly useful and exciting, only for it to have no real-world impact? Someone says ‘SOTA’ and I say ‘so what?’

The trend for reporting SOTA results is a clear sign that our industry has not absorbed Goodhart’s Law: if you let a measure (like a benchmark) become a target in itself, it will cease to be a good measure. The way this has transpired in AI is that we've created lots of benchmarks to help us get a feel for model capabilities, then researchers and engineers have optimised for high benchmark scores, and the effect has been to detract from long-term progress towards real intelligence. This is a common pattern: reward hospitals for reducing length of stay and you’ll soon find patients being discharged before they’re ready, while the original intention of improved care goes out the window.

Enter the ARC Prize, the $1 million prize fund which recently completed its second annual run, looking to be the measure of AGI by fixing what we’re all getting wrong about evaluations. In fact, the ARC Prize is one of the few things keeping us talking about AGI these days, as the idea of general intelligence is starting to take a backseat to trustworthy AI with tangible ROI. But as we take stock of this year’s results, I want to ask: has the ARC Prize proved itself to be a much-needed innovation or is it just another victim of Goodhart’s Law?

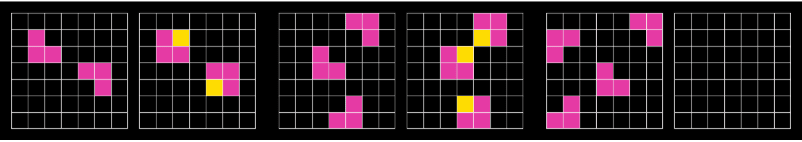

The ARC dataset was presented in its first version by François Chollet in his 2019 paper, ‘On the Measure of Intelligence’. If you don’t already know what ARC tasks look like, you’ll understand them quickly, as they’re heavily inspired by classic IQ tests. Here’s one simple example:

Each pair of grids shows an input and an output, though the output for the third pair is empty. The task is to infer the relationship for the two completed pairs and then give the output for the last pair using the same relationship. In this case, you would copy all the pink squares over and add a yellow square wherever it would turn an L-shape into a larger 2x2 square.
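To make the rule concrete, here is a minimal Python sketch of it. The grid encoding (lists of integers) and the colour codes for pink, yellow and empty are assumptions made for illustration, and the example grid is invented rather than taken from the ARC dataset.

```python
# Assumed colour codes, for illustration only.
PINK, YELLOW, EMPTY = 6, 4, 0

def complete_l_shapes(grid):
    """Copy the grid, then add a yellow cell wherever three pink cells
    in a 2x2 window leave exactly one empty corner."""
    out = [row[:] for row in grid]  # copies every pink square unchanged
    for r in range(len(grid) - 1):
        for c in range(len(grid[0]) - 1):
            window = [(r, c), (r, c + 1), (r + 1, c), (r + 1, c + 1)]
            # Always read from the input grid so new yellow cells
            # never influence later windows.
            pink = [p for p in window if grid[p[0]][p[1]] == PINK]
            empty = [p for p in window if grid[p[0]][p[1]] == EMPTY]
            if len(pink) == 3 and len(empty) == 1:
                er, ec = empty[0]
                out[er][ec] = YELLOW
    return out

# An invented input containing a single pink L-shape.
example = [
    [0, 0, 0, 0],
    [0, 6, 6, 0],
    [0, 6, 0, 0],
    [0, 0, 0, 0],
]
result = complete_l_shapes(example)
for row in result:
    print(row)
```

Writing the rule down is the easy part. The difficulty of an ARC task lies in inferring the rule from two examples, which this sketch does not attempt.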

Although Chollet doesn’t mention Goodhart’s Law by name in his paper, the phenomenon was very much an inspiration, with its effect on AI development being as old as the field itself. Take the classic example of chess. Though it may seem strange now, many researchers in the 1970s used to believe that building a machine that could reliably beat humans at chess would require significant progress towards AGI. This is because, before anyone had actually solved chess with a program, the best we knew was that humans use a wide range of very general cognitive mechanisms to play it, like perception, memory, learning, analysis and so on. But while humans have to use general intelligence for chess because nature didn’t plan for it in our evolution, the moment AI researchers put a target on chess as the thing to build for, much simpler engineering paths opened up, eliminating the need for general capabilities. So, people made chess-playing programs that couldn’t do much else.

This history of proxy targets undercutting real goals has persisted through the decades with one other important feature that’s especially relevant today: for any problem that has multiple solutions where some generalise better than others, often the less general solutions are more expensive to build and run, either because they’re literally cash expensive to use, or because you always have to start from scratch with every new problem. One of the reasons why humans evolved general intelligence is because we’re constrained to be resource efficient, but if you have the option of being very inefficient - such as by using large scale brute force search or hyperscaling data centres to train LLMs - it’ll simplify the engineering problem for you to just pay for an inefficient solution.

As a result, the AI industry is now in a bit of a measurement crisis, with an endless supply of benchmarks that are touted as measures of progress towards AGI while failing to be so, as our collective pockets are deep enough to solve them using naive solutions. For some industries, achieving AGI is irrelevant - with enough data, they'll be automated over the next few years regardless - but there are other industries where data can never be had in a large enough supply and betting on inefficient technology is sure to fail.

In his paper, Chollet diagnoses the underlying problem as a failure to define and measure intelligence properly. While the typical benchmark measures skill, skill is only a byproduct of intelligence which can be achieved by other, simpler means. For Chollet, intelligence itself is about efficient skill acquisition. If you compare two systems that reach an equal skill level, the more intelligent system is the one that’s least complex and uses the fewest resources. So, Chollet argues that getting to AGI requires us to reward the most efficient learning systems, not the most skilful ones. In the end, the most efficient systems will become the most skilful ones, but if you reward skill too early, you won’t get very far.

This might come as a surprise if you’ve seen how results on ARC are reported, as the leaderboard only records raw skill level, and the top prize of $700,000 is offered to whoever can achieve a skill level of 85% - efficiency is not mentioned, and although the submission rules require solutions to run offline with limited resources (thus precluding access to very costly LLMs), this favours efficiency only very weakly, as we will see.

There are a few reasons for this setup. One is that, although Chollet’s paper offers some formal definitions of efficiency, in practice it would be pretty much impossible to measure it fairly across solutions that use different designs. This is a particular problem for a $1 million prize fund in need of very clear judging criteria, as imperfect as they may be.

But a far more important reason is that Chollet believes you actually can construct a benchmark which only measures skill and which is nonetheless resilient to Goodhart’s Law (except in very extreme conditions) so long as the test data is sufficiently unpredictable that performance can’t be bought. This is a subtle point I’ll come to later but, first, I want to look at what the facts show from the top solutions in 2024 and 2025.

In 2024, 1st place went to the ARChitects, a team that scored 53.5%, winning $25k. Their solution fine-tuned an LLM on a large amount of synthetic training data not provided by ARC and, for each test case, synthesised many variants of the examples to fine-tune the model some more before generating the most probable solutions by a method that used yet more data synthesis. The model also had its token set massively reduced so that it couldn’t do much besides represent the ARC puzzles. In effect, the ARChitects built a system that was bespoke to solving ARC tasks, which generated models bespoke to each test case.
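As a rough illustration of what synthesising variants of a test case's examples can mean, the sketch below applies random rotations, flips and colour permutations to an input/output pair. These particular transformations are an assumption chosen because they are a common way to augment grid puzzles; they are not a description of the ARChitects' actual pipeline, and the function names are hypothetical.

```python
import random

def rotate90(grid):
    """Rotate a grid (a list of rows) 90 degrees clockwise."""
    return [list(row) for row in zip(*grid[::-1])]

def flip_h(grid):
    """Mirror a grid left to right."""
    return [row[::-1] for row in grid]

def recolour(grid, mapping):
    """Swap colours according to a mapping, leaving unmapped values alone."""
    return [[mapping.get(v, v) for v in row] for row in grid]

def variants(pair, n, seed=0):
    """Return n transformed copies of one (input, output) example.
    The same transformation is applied to both grids so that the
    underlying rule is preserved."""
    rng = random.Random(seed)
    inp, out = pair
    results = []
    for _ in range(n):
        turns = rng.randrange(4)
        flip = rng.random() < 0.5
        colours = list(range(1, 10))  # colour 0 (background) stays fixed
        shuffled = colours[:]
        rng.shuffle(shuffled)
        mapping = dict(zip(colours, shuffled))

        def transform(g):
            for _ in range(turns):
                g = rotate90(g)
            if flip:
                g = flip_h(g)
            return recolour(g, mapping)

        results.append((transform(inp), transform(out)))
    return results

# An invented example pair, expanded into five extra training pairs.
pair = ([[0, 1], [2, 0]], [[0, 1], [2, 2]])
augmented = variants(pair, n=5)
```

Variants like these only multiply the examples for one specific task, which is part of what makes the overall system bespoke to each test case.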

Although this doesn’t sound like a very general solution - human perception is not set up to deal with ARC tasks specifically; humans don’t require any training data at all to complete ARC tasks; humans don’t need to be shown a large number of variants of the same ARC task to understand it; and humans don’t change their perceptual apparatus for each task they encounter - it attracted a lot of attention because fine-tuning a model on each test case sounds a lot more dynamic and adaptive than prompting a static LLM, suggesting analogies with how humans adapt to new problems.

These analogies are misleading. We know that the data-hungry model that’s initially fine-tuned on the training data is not itself learning robust, general concepts or it wouldn’t need constant additional fine-tuning. It’s precisely because that method is not general that fine-tuning with extra data whenever possible adds some slight advantage. But that advantage is slight - remember, the solution was incorrect almost half the time - because the quantity of data available at test time is small relative to the model’s needs (which are far greater than a human’s). Fine-tuning at test time adds a little flex to the curve that the base model is fitting but it doesn’t take much for it to snap.

In fact, the interpretation of the approach as showing progress towards more adaptive learning is really just an artefact of ARC being set up to withhold potential training data until test time: each model which is bespoke to a test case is a static LLM with no adaptability, and while you could say that it’s the overall system creating the models which is adapting, that system is not learning any general capabilities because the models it churns out are not integrated or reused, they’re thrown away. The motto here is: give a man a fish and he’ll eat for a day, teach a man to fish and he’ll catch one fish before you have to teach him again.

A better mental model for test time fine-tuning is that it’s a clever way of exploiting the format of the competition to submit as many entries as there are test cases, each one tailored to its own test case, thus making the most efficient use of an inefficient technique. This year, the ARChitects came 2nd with the method, with a score of 16.5%, the drop being because of an update to the test that increased its difficulty. 1st place went to NVARC, a duo from NVIDIA who submitted a modified version of the ARChitects’ system which achieved 24%.

The NVARC team had a very straightforward strategy: increase the quantity and quality of the synthetic training data. Though this led to an increase in skill, the efficiency was of course unchanged (or possibly worse), so, by Chollet’s definition, the 2025 solution was no more intelligent than in 2024 and no progress has been made towards AGI. Of course, we mustn’t expect every year to bring progress but these results are a clear sign that many people working on ARC are not thinking about efficiency as a path to intelligence.

This is especially true of the big model providers. Although the largest frontier LLMs are ineligible for prizes because they’re too resource intensive, ARC measures them on a dedicated test set separate from the main prize track. In December 2024, OpenAI’s o3 reasoning model scored 87.5%, priced at $456,000 for 100 puzzles (a retail price that doesn’t necessarily reflect the actual cost to OpenAI, which could be higher, bearing in mind that they generated text as long as a stack of 55 Bibles for each puzzle). One natural interpretation of this is that $456,000 is the price you have to pay to buy 1st place through brute force, but the ARC Prize Foundation instead reported it as “a genuine breakthrough, marking a qualitative shift in AI capabilities”. In fairness, this doesn’t hail o3 as a new kind of intelligence specifically, and the report on this year’s results says that AGI is still science fiction, but the language is very carefully chosen to make a high score on ARC nonetheless seem like a significant indicator of progress. But is it?

Chollet’s 2019 paper is admirably open-minded about intelligence - he acknowledges that the idea is anthropocentric and must be understood in relation to interests that vary by context, so there isn’t a single, correct definition for us to adhere to. Instead, there are multiple definitions we can use as guides, each one more or less useful for different purposes. I therefore don’t want to dispute Chollet’s definition but rather want to consider whether the ARC Prize lives up to the one he gives.

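If you follow the logic of Chollet’s definition of intelligence as efficient skill acquisition, a rigorous evaluation would measure one or both of the following:

1. The design complexity and resources a system needs to reach a given skill level.
2. The skill level a system reaches with a given amount of complexity and resources.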

These are two perspectives on the same idea of efficiency, where (1) is more about using as little as you can and (2) is about making the most of what you have.

In ideal conditions, measuring (1) would mean looking at different systems that have the same skill level on some task and seeing which has the overall lowest design complexity and resource use (such as in training data and computational cost). Measuring (2) would mean looking at systems that have equal total complexity and resources, and seeing which performs the best.
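The two comparisons can be sketched in a few lines of Python, under the strong simplifying assumption that a system's design complexity and resource use can be collapsed into a single `cost` number. The `System` type, the function names and the figures are all hypothetical, and the first function relaxes "the same skill level" to "at least the target skill level".

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class System:
    name: str
    skill: float  # fraction of tasks solved, from 0.0 to 1.0
    cost: float   # assumed single figure for complexity plus resources

def cheapest_at_skill(systems, target_skill):
    """Perspective (1): among systems that reach the target skill,
    pick the one that uses the least."""
    eligible = [s for s in systems if s.skill >= target_skill]
    return min(eligible, key=lambda s: s.cost) if eligible else None

def most_skilful_within_budget(systems, budget):
    """Perspective (2): among systems that fit the budget,
    pick the one that performs best."""
    eligible = [s for s in systems if s.cost <= budget]
    return max(eligible, key=lambda s: s.skill) if eligible else None

# Invented figures, purely for illustration.
systems = [
    System("A", skill=0.50, cost=10.0),
    System("B", skill=0.50, cost=1000.0),
    System("C", skill=0.80, cost=1000.0),
]

print(cheapest_at_skill(systems, 0.50).name)             # A
print(most_skilful_within_budget(systems, 1000.0).name)  # C
```

The comparisons themselves are trivial once a cost figure exists. Everything hard is hidden inside the assumption that such a figure can be measured fairly across different designs.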

However, in practical conditions (1) is especially susceptible to the problems of Goodhart’s Law: if a benchmark were to require a specific skill level, engineers would primarily build to that level and it would encourage inefficient solutions. Yet (2) is also problematic because no one can seriously control for the quantity of resources used by different system designs, as it’s too hard to measure. Although this seems to plainly undermine both strategies, and is why ARC doesn’t use efficiency metrics, Chollet sought to bypass these issues by means of a design constraint that would ensure ARC requires a critical efficiency level to solve, regardless of our ability to measure it.

The key to this is something Chollet calls developer-aware generalisation: in contrast to people typically thinking of systems as generalising if they’re able to handle test cases that are outside their training data (what Chollet calls system-centric generalisation), developer-aware generalisation requires systems to handle test cases that are outside the data a developer encounters when building the system. This is by analogy with the fact that human intelligence is not only able to handle problems that individuals haven’t experienced before, but also problems that evolution didn’t prepare the species for (like chess).

Some care is needed on the definitions here, which the paper states a little vaguely. The comparison with nature mustn’t be taken too literally, as developers have an ability that nature doesn’t have, namely imagination, meaning they can guess test cases that are not contained in any formal dataset, and these guesses must be treated as among the examples the developer ‘encounters’. Moreover, Chollet formulated his ideas at a time before language models had become so popular, and their use requires an expansive notion of a ‘developer’: since LLMs are to a large extent knowledge bases, with reams of information about things like human visual perception and IQ test design, a developer using an LLM should be thought of as collaborating with the LLM, using the relevant awareness it has.

By this measure, it’s extremely unlikely that ARC in its 2024 version achieved its design goals. The benchmark specification offered a comprehensive enough description of its inspiration and constraints that a developer could guess the kinds of test cases it would contain without too much difficulty. Additionally, although an LLM prompted without any enhancements fares poorly on the benchmark, the fact that the ARChitects could achieve 53.5% with data synthesis and fine-tuning, while OpenAI could use brute force to get to 87.5%, suggests that LLMs undermine Chollet’s preparations for developer-aware generalisation in ways he didn’t foresee.

The 2025 update to the benchmark tried to correct some of these shortcomings by making the tests harder but it arguably went about it the wrong way. In the era of LLMs, the scope of all possible ARC tasks is simply too narrow to invent test cases that are robust to developer awareness, and the designers responded to this by compromising the principle that test cases should be easily solved by an average human, instead favouring test cases that require a good deal more analytical thought by ‘smart’ humans. While this has made brute force solutions less capable through a deepening of the search space, it hasn’t changed the basic character of the test design, so further reductions in the cost of computation could easily see the benchmark bested by inefficient solutions again.

In theory, designing a robust AGI benchmark is actually not that hard. It would look a little like this: someone specifies a protocol for interacting with some training and test datasets, they guarantee that the test will be something an average human can do, and as much training data as a human would need will be provided. And that’s as much as anyone gets to know - no task specifications or data are ever shared. This sounds pointless, of course, first because it unnecessarily formalises the problem of engaging with open-ended tasks in the real world (a system that could do well on such a benchmark wouldn’t need the benchmark to confirm its abilities) and second because the implicit reason why people construct narrower benchmarks with well-defined tasks is to give developers a leg up on the way to AGI with tests that require broad generalisation in just one domain.

But this is where we find the central flaw in ARC’s design: as Chollet observes, if you see a human playing chess, you can reasonably infer that they must be able to do many other things, as humans can only play chess with general thinking skills that can be applied to all sorts of problems. Likewise, for a task as narrow as ARC, if it were truly a test of progress towards AGI, then a system that could perform well on it should be able to perform well on other things, as it doesn’t make sense for a system to be capable of one task it was not prepared for and nothing more. ARC’s 2025 update therefore missed a huge opportunity to go broader in scope, rather than deeper in complexity, which would have helped address its issues with developer awareness.

In the end, the idea that highly constrained benchmarks are needed to give direction to AI development is more of a hindrance than a help, and ARC does appear to be another victim of Goodhart’s Law, although it’s to be commended for furthering the discussion about how to design a genuine intelligence benchmark. Of course, it’s easier to make these criticisms than to come up with foolproof alternatives - what exactly would it look like for ARC to go broader in scope without making it impossible to solve with highly unpredictable tests? Keep an eye on our future work on the intelligence measurement problem and you might find out.